For the first time in telecommunications history there will be a world-wide, uniform and seamless transmission standard for service delivery. Synchronous digital hierarchy (SDH) provides the capability to send data at multi-gigabit rates over today's single-mode fibre-optic links. This first issue of Technology Watch looks at synchronous digital transmission and evaluates its potential impact. Subsequent issues of TW will look at the customer-oriented broad-band services that will ride on the back of SDH deployment by PTOs. These will include:

- Frame relay

- SMDS (Switched Multi-Megabit Data Service)

- ATM (asynchronous transfer mode)

- High speed LAN services such as FDDI

Figure 1 - The Relationship Between Services

Overview

The use of synchronous digital transmission by PTOs in their backbone fibre-optic and radio networks will put in place the enabling technology to support the many new broad-band data services demanded by the new breed of computer user. However, the deployment of synchronous digital transmission is not only concerned with the provision of high-speed gigabit networks. It has as much to do with simplifying access to links and with bringing the full benefits of software control, in the form of flexibility and the introduction of network management.

In many respects, the benefits to the PTO will be the same as those brought to the electronics industry when hard-wired logic was replaced by the microprocessor. As with that revolution, synchronous digital transmission will not take hold overnight; deployment will be spread over a decade, with the technology first appearing on new backbone links. The first to feel the benefits will be the PTOs themselves, as demonstrated by the technology's early uptake by many operators, including BT. Only later will customers benefit directly, with the introduction of new services such as connectionless LAN-to-LAN transmission.

According to one market research company, it will take until the mid or late 1990s before 70% of revenue for network equipment manufacturers is derived from synchronous systems. Given that this is a multi-billion-dollar market, this constitutes a radical change by any standard (Figure 2).

Users who make extensive use of PCs and workstations with LANs, graphic layout, CAD and remote database applications are now looking to telecommunication service suppliers to provide the means of interlinking these now powerful machines at data rates commensurate with those achieved by their own in-house LANs. They also want to be able to transfer information to other metropolitan and international sites as easily and as quickly as they can to a colleague sitting at the next desk.

Figure 2 - European Revenue Growth of Transmission Equipment

Plesiochronous Transmission

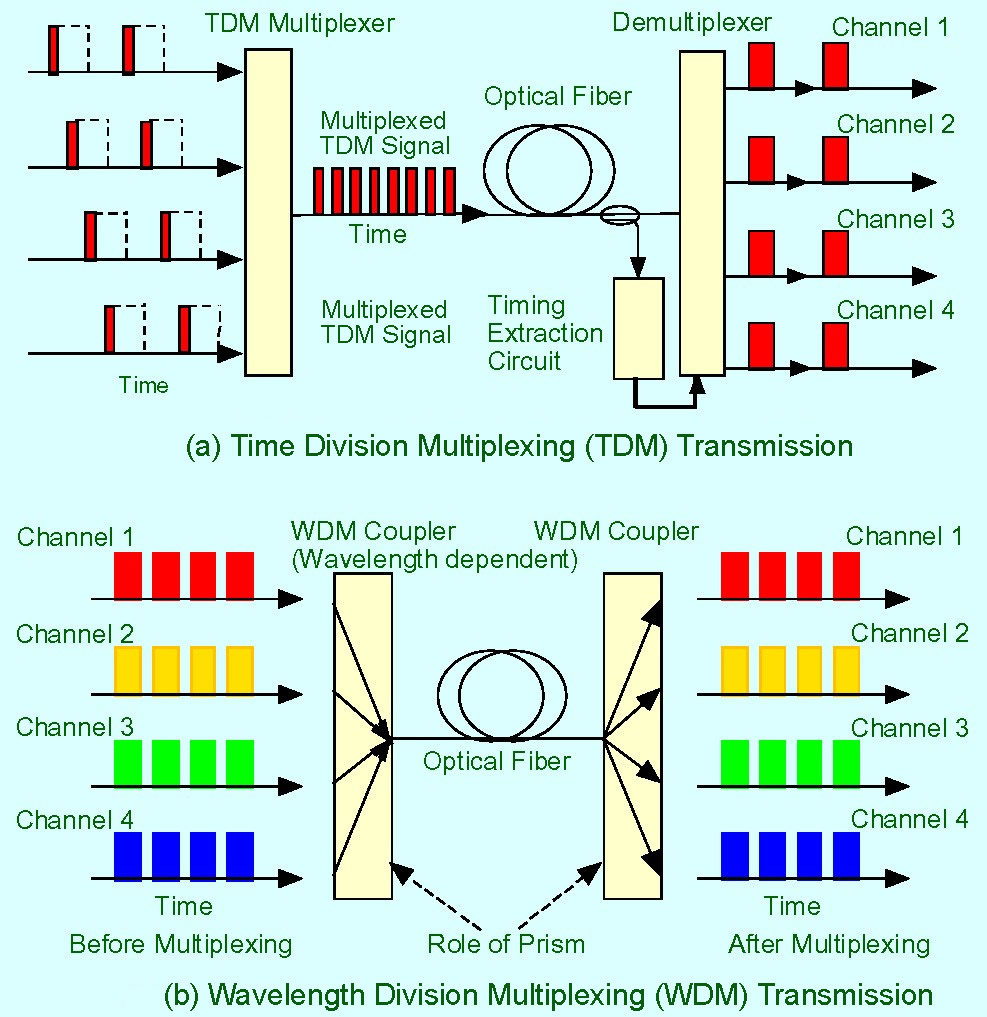

Digital data and voice transmission is based on a 2.048Mbit/s bearer consisting of 30 time division multiplexed (TDM) voice channels, each running at 64kbit/s (known as E1 and described by the CCITT G.703 specification). At the E1 level, timing is controlled to an accuracy of 1 in 10^11 by synchronising to a master Caesium clock. Increasing traffic over the past decade has demanded that more and more of these basic E1 bearers be multiplexed together to provide increased capacity. During this time rates have increased through 8, 34, and 140Mbit/s. The highest capacity commonly encountered today for inter-city fibre-optic links is 565Mbit/s, with each link carrying 7,680 base channels, and now even this is insufficient.

Unlike E1 2.048Mbit/s bearers, higher rate bearers in the hierarchy are operated plesiochronously, with tolerances on the absolute bit rate ranging from 30ppm (parts per million) at 8Mbit/s to 15ppm at 140Mbit/s. Multiplexing such bearers (known as tributaries in SDH terminology) to a higher aggregate rate (e.g. 4 x 8Mbit/s to 1 x 34Mbit/s) requires each tributary to be padded with extra bits so that the combined rate, together with the added control bits, matches the final aggregate rate. Plesiochronous transmission is now often referred to as plesiochronous digital hierarchy (PDH).
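The arithmetic behind the plesiochronous hierarchy can be checked with a short sketch. It assumes the exact standard bearer rates (2.048, 8.448, 34.368 and 139.264Mbit/s), which the text rounds to 2, 8, 34 and 140Mbit/s; the function names are illustrative only. It shows how the channel count grows by a factor of four per level, how much capacity each level leaves for padding and control bits, and what a ppm tolerance means in bits per second.

```python
# Illustrative arithmetic only. Rates are in kbit/s and are the exact
# standard values, which the article quotes in rounded form.
RATES_KBIT = {"E1": 2048, "E2": 8448, "E3": 34368, "E4": 139264}
TRIBUTARIES_PER_STEP = 4       # each level multiplexes four lower-rate bearers
VOICE_CHANNELS_PER_E1 = 30     # from the text


def voice_channels(level_index):
    """Voice channels at a hierarchy level (0 = 2Mbit/s, 4 = 565Mbit/s)."""
    return VOICE_CHANNELS_PER_E1 * TRIBUTARIES_PER_STEP ** level_index


def overhead_kbit(lower, upper):
    """Capacity left for padding and control bits when four `lower`
    tributaries are multiplexed into one `upper` bearer."""
    return RATES_KBIT[upper] - TRIBUTARIES_PER_STEP * RATES_KBIT[lower]


def tolerance_bit_s(rate_kbit, ppm):
    """Allowed deviation of the bit rate, in bit/s, for a ppm tolerance."""
    return rate_kbit * 1000 * ppm / 1_000_000


if __name__ == "__main__":
    print([voice_channels(i) for i in range(5)])
    print(overhead_kbit("E1", "E2"), overhead_kbit("E2", "E3"))
    print(tolerance_bit_s(RATES_KBIT["E2"], 30))
```

The fifth level gives 7,680 channels, matching the figure quoted for 565Mbit/s links, and four 2.048Mbit/s tributaries leave 256kbit/s of the 8.448Mbit/s aggregate for padding and control.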

Figure 3 - A typical Plesiochronous Drop & Insert

Because of the large investment in earlier generations of plesiochronous transmission equipment, each step increase in capacity has necessitated maintaining compatibility with what was already installed by adding yet another layer of multiplexing. This has created a situation in which each data link has a rigid physical and electrical multiplexing hierarchy at either end. Once multiplexed, there is no simple way an individual E1 bearer can be identified in a PDH hierarchy, let alone extracted, without fully demultiplexing down to the E1 level again, as shown in Figure 3. The limitations of PDH multiplexing are:

- A hierarchy of multiplexers at either end of the link can lead to reduced reliability and resilience, minimal flexibility, long reconfiguration turn-around times, large equipment volume, and high capital-equipment and maintenance costs.

- PDH links are generally limited to point-to-point configurations with full demultiplexing at each switching or cross connect node.

- Incompatibilities at the optical interfaces of two different suppliers can cause major system integration problems.

- To add or drop an individual channel, or to add a lower rate branch to a backbone link, a complete hierarchy of MUXs is required, as shown in Figure 3.

Because of these limitations of PDH, the introduction of an acceptable world-wide synchronous transmission standard called SDH is welcomed by all.
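The drop-and-insert limitation described above can be made concrete with a small sketch. It uses the nominal rates from the text, and the function name `drop_insert_stages` is hypothetical. It simply lists the demultiplexing steps needed to reach a 2Mbit/s tributary from a higher-rate bearer, followed by the multiplexing steps needed to rebuild that bearer.

```python
# Nominal PDH rates from the text, in Mbit/s, highest first.
PDH_NOMINAL_MBIT = [140, 34, 8, 2]


def drop_insert_stages(from_rate, to_rate=2):
    """List the demultiplex and re-multiplex steps needed to reach a
    tributary at `to_rate` and then restore the bearer at `from_rate`."""
    hi = PDH_NOMINAL_MBIT.index(from_rate)
    lo = PDH_NOMINAL_MBIT.index(to_rate)
    chain = PDH_NOMINAL_MBIT[hi:lo + 1]
    pairs = list(zip(chain, chain[1:]))
    down = [f"demux {a} -> {b}" for a, b in pairs]
    up = [f"mux {b} -> {a}" for a, b in reversed(pairs)]
    return down + up


if __name__ == "__main__":
    for stage in drop_insert_stages(140):
        print(stage)
```

For a 140Mbit/s bearer this gives six stages (three down, three up) to access a single 2Mbit/s channel, which is the equipment chain Figure 3 depicts.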

Synchronous Transmission

In the USA in the early 1980s, it was clear that a new standard was required to overcome the limitations presented by PDH networks, so the ANSI (American National Standards Institute) SONET (synchronous optical network) standard was born in 1984. By 1988, collaboration between ANSI and CCITT produced an international standard, a superset of SONET, called synchronous digital hierarchy (SDH).

US SONET standards are based on STS-1 (synchronous transport signal), equivalent to 51.84Mbit/s. When encoded and modulated onto a fibre-optic carrier, STS-1 is known as OC-1. This particular rate was chosen to accommodate a US T-3 plesiochronous payload and so maintain backwards compatibility with PDH. Higher data rates are multiples of this, up to STS-48, which is 2.488Gbit/s.

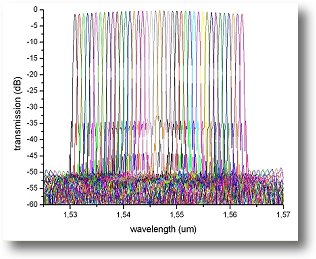

SDH is based on an STM-1 (155.52Mbit/s) rate, which is identical to the SONET STS-3 rate. Some higher SDH bearer rates also coincide with SONET rates: STS-12 and STM-4 both run at 622Mbit/s, and STS-48 and STM-16 both run at 2.488Gbit/s. Mercury is currently trialling STM-1 and STM-16 rate equipment.
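The relationship between the SONET and SDH rates quoted here is simple multiplication, as the following sketch shows. The base rates come from the text; the helper names are illustrative.

```python
# Base rates from the text, in kbit/s (integers avoid rounding issues).
STS1_KBIT = 51_840     # SONET STS-1: 51.84Mbit/s
STM1_KBIT = 155_520    # SDH STM-1: 155.52Mbit/s


def sts_rate_kbit(n):
    """Rate of SONET STS-n in kbit/s."""
    return n * STS1_KBIT


def stm_rate_kbit(n):
    """Rate of SDH STM-n in kbit/s."""
    return n * STM1_KBIT


def equivalent_sts(stm_n):
    """SONET level whose rate equals that of STM-n."""
    return 3 * stm_n


if __name__ == "__main__":
    for n in (1, 4, 16):
        sts_n = equivalent_sts(n)
        print(f"STM-{n} = STS-{sts_n} = {stm_rate_kbit(n) / 1000:.2f} Mbit/s")
```

Because STM-1 is exactly three times STS-1, every STM-N rate has a matching STS-3N rate, which is why 622Mbit/s and 2.488Gbit/s appear in both hierarchies.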

SDH supports the transmission of all PDH payloads other than 8Mbit/s, as well as ATM, SMDS and MAN data. Most importantly, because each type of payload is transmitted in containers synchronous with the STM-1 frame, selected payloads may be inserted into or extracted from the STM-1 or STM-N aggregate without the full hierarchical de-multiplexing that PDH systems require.
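One way to see why a synchronous frame makes individual payloads accessible is to look at the frame arithmetic. The sketch below assumes the STM-1 frame dimensions from the SDH standard (9 rows by 270 byte columns, repeated 8,000 times per second), which are not given in this article, and it ignores the pointer mechanism that real equipment uses to locate containers within the frame.

```python
# STM-1 frame dimensions from the SDH standard (not stated in the article).
ROWS = 9
COLUMNS = 270
BITS_PER_BYTE = 8
FRAMES_PER_SECOND = 8000   # one frame every 125 microseconds


def frame_bytes():
    """Number of bytes in one STM-1 frame."""
    return ROWS * COLUMNS


def line_rate_bit_s():
    """Aggregate STM-1 rate implied by the frame dimensions."""
    return frame_bytes() * BITS_PER_BYTE * FRAMES_PER_SECOND


def byte_position_rate_bit_s():
    """Capacity of one byte position that repeats in every frame."""
    return BITS_PER_BYTE * FRAMES_PER_SECOND


if __name__ == "__main__":
    print(frame_bytes())               # 2430
    print(line_rate_bit_s())           # 155520000
    print(byte_position_rate_bit_s())  # 64000
```

The frame dimensions reproduce the 155.52Mbit/s STM-1 rate, and each byte position in the frame corresponds to one 64kbit/s channel, so a payload occupies a known set of byte positions rather than being buried under layers of bit padding.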

Further, all SDH equipment is software controlled, even down to the individual chip. This allows centralised management of the network configuration and largely obviates the need for plugs and sockets. A future SDH network could look like Figure 4.

Figure 4 - An Example Future SDH Digital Network

Benefits of SDH Transmission

SDH transmission systems have many benefits over PDH:

- Software control allows extensive use of intelligent network management software for high flexibility, fast and easy re-configurability, and efficient network management.

- Survivability. With SDH, ring networks become practicable and their use enables automatic reconfiguration and traffic rerouting when a link is damaged. End-to-end monitoring will allow full management and maintenance of the whole network.

- Efficient drop and insert. SDH allows simple and efficient cross-connect without full hierarchical multiplexing or de-multiplexing. A single E1 2.048Mbit/s tail can be dropped or inserted with relative ease even on Gbit/s links.

- Standardisation enables the interconnection of equipment from different suppliers through support of common digital and optical standards and interfaces.

- Robustness and resilience of installed networks is increased.

- Equipment size and operating costs are reduced by removing the need for banks of multiplexers and de-multiplexers. Follow-on maintenance costs are also reduced.

- Backwards compatibility will enable SDH links to support PDH traffic.

- Future-proof. SDH, in partnership with ATM (asynchronous transfer mode), forms the basis of broad-band transmission, otherwise known as B-ISDN, and of its precursor, Switched Multi-Megabit Data Service (SMDS).
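The survivability benefit listed above rests on a simple property of rings: there are always two ways round. The following toy sketch illustrates the idea only; it is not the protection switching protocol used by real SDH equipment, and the function name `ring_path` and the node labels are hypothetical.

```python
def ring_path(nodes, src, dst, failed_link=None):
    """Return a path from src to dst around a ring, avoiding one failed link.

    `nodes` lists the ring nodes in order. The clockwise direction is tried
    first, then the opposite one. Returns None if neither direction works.
    """
    n = len(nodes)
    start = nodes.index(src)
    broken = frozenset(failed_link) if failed_link is not None else None
    for step in (1, -1):
        path = [src]
        i = start
        blocked = False
        while nodes[i] != dst:
            j = (i + step) % n
            if frozenset((nodes[i], nodes[j])) == broken:
                blocked = True   # this direction crosses the damaged link
                break
            path.append(nodes[j])
            i = j
        if not blocked:
            return path
    return None


if __name__ == "__main__":
    ring = ["A", "B", "C", "D"]
    print(ring_path(ring, "A", "C"))                          # normal route
    print(ring_path(ring, "A", "C", failed_link=("B", "C")))  # rerouted
```

With the link between B and C damaged, traffic from A to C is sent the other way round the ring via D, which is the kind of automatic rerouting the text has in mind.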

Conclusions

The introduction of synchronous digital transmission in the form of SDH will eventually revolutionise all aspects of public data communication, from individual leased lines through to trunk networks. Because of the state-of-the-art nature of SDH and SONET technology, extensive field trials are taking place throughout the world in 1992, prior to introduction in the 1993 - 1995 time scale.

There is still a lack of understanding of the ramifications of introducing SDH within telecommunications operations. In practice, the extensive use of software control will have a positive impact on all parts of the business. It is not so much a question of whether the technology will be taken up, but when.

The introduction of SDH will lead to the availability of many new broad-band data services, providing users with increased flexibility. It is in this area that confusion reigns, with competing technologies vying for supremacy. These will be discussed in future issues of Technology Watch.

Importantly for PTOs, SDH will bring about more competition between equipment suppliers, who will be designing essentially to a common standard. One practical effect could be to force equipment prices down, as access to world rather than local markets brings larger volumes. At least one manufacturer is currently stating that it will spend up to 80% of its SDH development budget on management software rather than hardware. Such was the situation in the computer industry in the early 1980s. Not least, SDH will have a great impact on issues such as staffing levels and the skills required of personnel within PTOs.

SDH deployment will take a great deal of investment and effort since it replaces the very infrastructure of the world's core communications networks. But it must not be forgotten that there are still many issues to be resolved.

The benefits to be gained in terms of improving operator profitability, and helping them to compete in the new markets of the 1990s, are so high that deployment of SDH is just a question of time.

Hernandez Caballero Indiana M. CI: 15.242.745

Course: SCO

Source: http://images.google.co.ve/imgres?imgurl=http://www.gare.co.uk/technology_watch/images/sdh4.gif&imgrefurl=http://www.gare.co.uk/technology_watch/sdh.htm&usg=__GIHKzmjHZisMDOScJFUIx5DQWgk=&h=277&w=421&sz=7&hl=es&start=2&um=1&itbs=1&tbnid=12nRG6Iu8VZUCM:&tbnh=82&tbnw=125&prev=/images%3Fq%3DSDH%26um%3D1%26hl%3Des%26client%3Dfirefox-a%26rls%3Dorg.mozilla:es-ES:official%26channel%3Ds%26tbs%3Disch:1